Chapter 6: How to Lead Bots¶

Leading bots effectively requires a specific playbook: Know, Assemble, Guide, and Align.

Know Them¶

This is the heart of the discipline, and its most challenging technical aspect. I have devoted a chapter of this book to serve as your primer.

Every component of every bot contributes to its overall capabilities. To reach a gestalt understanding of the teammate, you must know the pieces.

Think of it like knowing a bit of psychology so that you can better relate to other people. But we rarely have the benefit of asking "How would I feel if...?"

A Bot is an alien intelligence.

Analogies are useful, but we must also sometimes wrangle with the true nature of bots, as engineers and technicians, in order to relate to them appropriately.

A Bot has a world model, as I described earlier.

You only have to know them well enough to make good decisions regarding them. There's more depth in this book than you really need, but it's also insufficient because the world is changing fast. You have to maintain currency.

It may be useful to classify bots. Bots with bodies vs without. Bots that are creative vs mostly analytical.

Assemble Them¶

We have learned that Bots can be LLM brains equipped with tools, physical robots sharing our space, or composites of digital and physical embodiments. They are machines that function like people.

Assembly requires a willingness to hire and fire. Just like with people, there is a cost associated with both adding and removing bots, but both actions must always be on the table. The benefits and penalties associated with hiring decisions are similar, but the volatility of the hiring pool is orders of magnitude greater: every dimension of a "good hire" is in constant disruption, with waves of new software techniques, new hardware capabilities, new vendors, and so on.

Buy Vs DIY¶

Open source is the only way to really know what's going on with a computer program. Fortunately, there are a lot of smart and good people who understand that and are making efforts to grant the world the privilege of owning their bots.

There's a game I play called Blood on the Clocktower, where one group of people is good and the other evil. The good players don't know anyone's alignment (evil does, but they're outnumbered.) A character called the marionette is evil, but doesn't know it. The character might not be in the game, but the possibility that for example you, a good player, might be mistaken about your own alignment dramatically changes how you play. Even though the mechanical effect of the marionette is minimal, its potential presence in the game alters how everyone plays and therefore has a large true impact. I think of open source this way. The fact that you can walk away from big tech, and their secret AI models, will induce them to behave better, even if not a lot of us are on open source.

To be sure, big tech is not all bad when it comes to open source. Most big tech companies are leaning into open source in some capacity. There are open models

So consider open source. This is one of those topics where I could fill pages evangelizing something that's probably not going to shift your position much, so I'll wrap it up: You have more power if your software is free (as in freedom) and open. Have you heard the good word of the Linux?

Along those lines, consider your own private source. The fact that coding has become the purview of AI, primarily, means that it's much more accessible. You can make the software do what you need by making it yourself, and you'll then control the source code because you wrote it.

Making physical robots is hard. I've done it as part of the US Govt (read, lots of money) and with my animatronics startup (read, very little money). They're both hard. In fact, I got into simulations work largely because I could keep exploring ideas and building things without buying and fabricating things (Lots of my simulations were essentially character robots in a game engine.) So while I'm going to touch on some DIY for robots, I want it to be on the foundation of this basic understanding that it's not an easy road.

Bot Aesthetics¶

If you had to work alongside one of two people that performed their job tasks exactly the same, but one of them was horrific to look at, and the other beautiful, which would you choose?

If your choice was between a calm and jovial spirit and a grumpy jerk, which then?

Aesthetics matter.

All living things evolved for their environment, and there is good evidence that humans evolved literally for the environment of other humans. It's a remarkable social insight.

Bots, as thinking systems which we can't help but personify, will be our environment.

When I was building army bots, we actually studied how to make our machinery look scary! It is an effective contribution to your overall warfighting force when your opponents are intimidated by the very sight of you. Surely, this insight has led to everything from knights in shining armor to the Jolly Roger to Drone "Fireworks" shows.

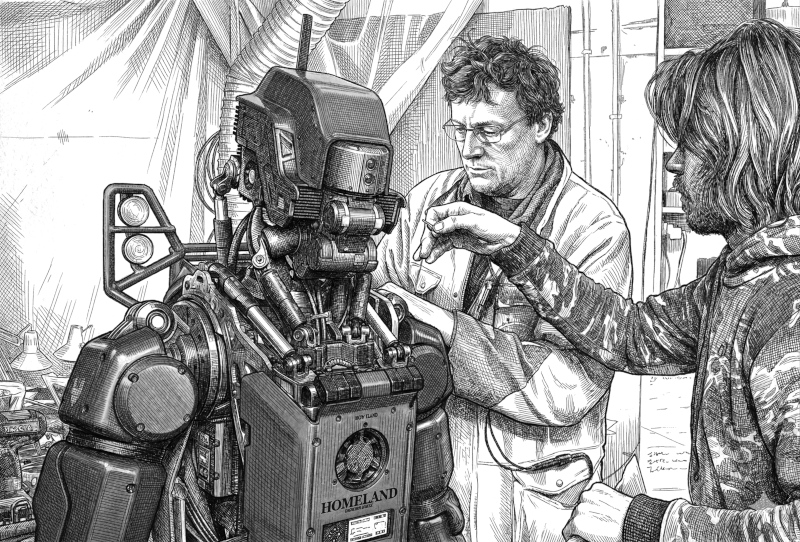

When I was building animatronic creatures for The Hobbit and other films, it was entirely aesthetics. The goal was to get good visuals on film, and that leads to simple directives: Look right. Act right. Designing and engineering animatronic characters brought me to the realization that all robots have an aesthetic component. It is unavoidable. You cannot build a robot that operates around people without affecting those people on aesthetic grounds.

Be responsive to the fact that aesthetics are in play. If you're integrating a chef robot, and it's great at cutting vegetables, but looks a bit like a stab-crazy Terminator, you would be remiss to prioritize faster movement.

"Programmer Art" is a phrase from game development that describes visual assets produced by the person trying to get the blasted thing to just work, who doesn't really have the skill or care to make it look nice. Aesthetics are not top priority for most programmers. The problem is, of course, when the bar for aesthetics is set too low and programmer art becomes final product. I think the idea intuitively extends to other domains. Industrial designers can narrow their minds into making everything look like an iPhone. And it was architects, after all, who gave us brutalism. So be aware of programmer art in bots. Be aware of brutalist bents of designers.

Allow yourself to listen to your intuition and your desires for a better environment to guide your Bot creation and Bot selection.

In 2014 I published a paper in The Journal of Human-Robot Interaction on the aesthetics of robots. I was interested in the visual design of robots to make them more pleasant to work alongside.

I presented several principles that I think are still valid: Things like moving toward substantive bodies rather than skeletal frames, and clear, exaggerated expressiveness (which avoids the uncomfortable mystery of what this alien intelligence is thinking). My exemplar for a good robot design was Kermit the Frog. Not a robot, but the cuddly fabric enshrouding Jim Henson's hand.

You can dress up and decorate a robot. Put googly eyes on it. Give it a good name.

If you are engineering a Bot from a more fundamental level you can incorporate shape languages (round means safe, and pointy means dangerous!).

Much of what applies to physical robots applies to purely digital Bots. After all, digital Bots can have avatars.

I came up with a particular look I'm fond of.

This captures the alien intelligence, the strange quality of drifting in and out of existence (when it's working vs halted), and the hat is the simplest communiqué of "role".

I don't mind if you borrow it, but I'd certainly recommend you create what works for you. A bot's appearance is an expression of your vision as a leader.

A bot's personality is also part of its aesthetics. Remember that grump you decided to not hire? Well, don't hire a grumpy bot either.

At the moment, the most straightforward way to achieve the personality you want in a Bot is to have a PERSONALITY file in your context.

Here's a quick example:

Personality: You are nice.
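To make the idea concrete, here is a minimal sketch of how a personality file might be folded into a Bot's context. It assumes your Bot accepts a plain-text context string; the file name `PERSONALITY.md` and the helper names `load_personality` and `compose_context` are hypothetical, chosen only for illustration, and real agent frameworks will have their own conventions.

```python
from pathlib import Path


def load_personality(path: Path) -> str:
    """Read the personality file as plain text (hypothetical file layout)."""
    return path.read_text(encoding="utf-8")


def compose_context(personality: str, task: str) -> str:
    """Put the personality first so it frames everything that follows."""
    return f"{personality.strip()}\n\n{task.strip()}"


if __name__ == "__main__":
    import tempfile

    # Write a throwaway personality file, then build a context from it.
    with tempfile.TemporaryDirectory() as tmp:
        personality_file = Path(tmp) / "PERSONALITY.md"
        personality_file.write_text("Personality: You are nice.\n", encoding="utf-8")
        context = compose_context(
            load_personality(personality_file),
            "Summarize today's meeting notes.",
        )
        print(context)
```

Keeping the personality in its own file means you can revise the Bot's character without touching its task instructions.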

The look and feel of a Bot also signals what it does.

In design, this is called affordance.

A simple example of this is a door. If one side of the door has a handle but the other doesn't, the non-handle side is the push side.

Delegate¶

Organizational Charts with Bots¶

With a variety of bots you get differing approaches and knowledge bases, diverse thought, and the potential for conflicts that can lead to growth and optimal outcomes.

However, a variety of bots with which you interact creates more complexity in your environment. It might be most convenient to have just one assistant, for example.

Your org chart can be shaped and reshaped to your liking.
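If it helps to think of this as a data structure, an org chart is just a tree you are free to rearrange. The following is a rough sketch, not a prescribed tool: the `Node` class and the `render`, `count_bots`, and `move` helpers are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass, field


@dataclass(eq=False)  # compare nodes by identity, so same-named bots stay distinct
class Node:
    name: str
    kind: str  # "person" or "bot"
    reports: list["Node"] = field(default_factory=list)

    def add(self, child: "Node") -> "Node":
        """Attach a direct report and return it for chaining."""
        self.reports.append(child)
        return child


def render(node: Node, depth: int = 0) -> list[str]:
    """Return one indented line per node, parents before their reports."""
    icon = "👤" if node.kind == "person" else "🤖"
    lines = [f"{'  ' * depth}{icon} {node.name}"]
    for child in node.reports:
        lines.extend(render(child, depth + 1))
    return lines


def count_bots(node: Node) -> int:
    """Count every bot in the tree rooted at this node."""
    own = 1 if node.kind == "bot" else 0
    return own + sum(count_bots(child) for child in node.reports)


def move(child: Node, old_parent: Node, new_parent: Node) -> None:
    """Reshape the chart by moving a report from one parent to another."""
    old_parent.reports.remove(child)
    new_parent.reports.append(child)


if __name__ == "__main__":
    you = Node("You", "person")
    bot = you.add(Node("Bot", "bot"))
    helper = bot.add(Node("Sub-Bot", "bot"))
    print("\n".join(render(you)))

    # Promote the sub-bot so it reports to you directly.
    move(helper, bot, you)
    print("\n".join(render(you)))
```

Counting bots or printing the tree is a quick way to notice when the complexity of your environment has grown past what you want to manage.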

You work with one bot. Any agent will typically have other agents that it works with, often creating them on the fly.

mindmap
  (("👤<br>You"))
    ["🤖<br>Bot"]
      ["🤖<br>Sub-Bot"]
      ["🤖<br>Sub-Bot"]

You work with many bots.

mindmap
  (("👤<br>You"))
    ["🤖<br>Bot A"]
    ["🤖<br>Bot B"]
    ["🤖<br>Bot C"]

You work with one or more Bot Leaders (i.e., people). It is still useful for you to know about Bot Leadership so you can interact with them better. I'm not covering how to work with people much in this book because it's thoroughly covered elsewhere.

mindmap
  (("👤<br>You"))
    (("👤<br>Bot<br>Leader"))
      ["🤖<br>Their Bot(s)"]
    (("👤<br>Bot<br>Leader"))
      ["🤖<br>Their Bot(s)"]
    (("👤<br>Bot<br>Leader"))
      ["🤖<br>Their Bot(s)"]

You will work with your bots and other bots (i.e., big tech's), your bots will have bots, and you'll work with other bot leaders who have their own similar trees.

mindmap
  (("👤<br>You"))
    ["🤖<br>Your Bot(s)"]
      ["🤖<br>Sub-Bot"]
    ["🤖<br>Big Tech<br>Bot"]
    (("👤<br>Other Bot<br>Leader"))
      ["🤖<br>Their Bot(s)"]

Guide Them¶

There's an infamously dreaded question for creatives (writers, comics, makers, etc.):

Where do you get your ideas?

It's too reductive, abstract, and just difficult to respond to with any accuracy. The comedian Norm Macdonald even had a recurring bit on his talk show where his hapless sidekick would "earnestly" put it to their guest just to mess with them.

Some questions are simply not going to take the conversation anywhere interesting.

People who don't work deeply with Bots often ask this question:

What work did you do and what did the AI do?

Well, let me try to explain.

Have you ever created new music with a group of friends? Have you ever sat at a writer's table? Have you ever stood on an improv stage with a cast of on-the-spot characters and tried to build a scene?

Those are the purest forms I can think of, but I'll stretch the idea a bit:

Have you ever played charades? Have you ever simultaneously co-written a document? Have you ever had a good meeting?

In these scenarios, somebody throws out a half-formed sentence and somebody else catches it wrong -- hears something the speaker never meant -- and that mishearing sparks a "yes, and" that pivots the whole room into territory nobody planned but everybody suddenly recognizes. You're reacting to something that was a reaction to your own throwaway line from three minutes ago, except your partner bent it just enough that now you're seeing a third thing you hadn't considered until the words were already out of your mouth. Meanwhile someone across the circle just shifted their weight and that changed the energy and now the thing you were about to say doesn't fit anymore so you say something else entirely and that lands, and the person next to you riffs on it before you even finish, and their riff reminds you of the original idea but it's mutated now, unrecognizable, better. A mistake becomes a hook. Your original intent gets swallowed by the momentum of what things are becoming.

That is what "group flow" feels like. You are in it. You are co-creating with other minds, and to piece together how exactly things happened afterwards is both absurdly difficult and not very interesting.

Instead of asking the question "How exactly did the thought work go down," I'd suggest getting into a group flow with AI. Then your answer to the question would be, "It was probably similar to what it was like when I did it," which I think is the most informed one could be on the subject.

Your role as Bot Leader is to guide the work toward your vision, no matter the form or source of the work being done.

Guidance is active. Remember that this is the worst AI will ever be; Bots grow, learn, and evolve. You shepherd that growth toward your desired outcome.

Communicate your vision. If a bot is not doing what you want or expect, it's most likely going to be worthwhile to course-correct. Something as simple as "You are doing X, but I want you to do Y," is remarkably effective. Any knowledge of your bot's inner workings will help you guide it. For example, this is a common debugging pattern: "I don't like that you did X. Please identify if there is anything in your context that helped bring it about." Once the problem is identified, you could move to "Give me a corrective action plan," assuming the agent hasn't already solved the problem proactively.
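The course-correction phrases above are regular enough to template. The sketch below is one possible way to do that, assuming you send messages to your Bot as plain strings; the function names `course_correct`, `diagnose`, `corrective_plan`, and `debugging_sequence` are hypothetical and exist only for this example.

```python
def course_correct(observed: str, desired: str) -> str:
    """State plainly what the bot is doing and what you want instead."""
    return f"You are doing {observed}, but I want you to do {desired}."


def diagnose(observed: str) -> str:
    """Ask the bot to look for the cause in its own context."""
    return (
        f"I don't like that you did {observed}. "
        "Please identify if there is anything in your context "
        "that helped bring it about."
    )


def corrective_plan() -> str:
    """Follow-up for when the bot has not already fixed the problem itself."""
    return "Give me a corrective action plan."


def debugging_sequence(observed: str) -> list[str]:
    """The diagnose-then-plan pattern as an ordered list of messages."""
    return [diagnose(observed), corrective_plan()]


if __name__ == "__main__":
    print(course_correct("long summaries", "three-bullet summaries"))
    for message in debugging_sequence("long summaries"):
        print(message)
```

In practice you would send the second message only after reading the Bot's answer to the first, since a good agent may already have proposed the fix.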

Personal Development for Bots.

Keep Them Aligned¶

The vision of the leader becomes reality; leadership is all about alignment. By leading bots, we help solve the AI alignment problem at a practical level. You express your principles through your actions and your directives to subordinates. If you successfully assemble, know, and actively guide your bots, you have fundamentally solved alignment for your team.

Clearly, if one accepts the depth of challenge the alignment problem presents, just doing a good job of leading Bots will not solve the alignment problem. I talk about "alien intelligence" because the exact mechanisms clicking through the mind of an AI as we interact with it are as mysterious as those of another person, but without the benefit of similarity to the inside view of your own. There are efforts underway to establish software guardrails, legal regulation, and other means of making AI "good," and those things will probably steer our ship in the right direction, but the environment of Bots you lead is entirely in your sphere of influence. And, like always, the actions of individuals and small teams are what aggregate into the movements of societies.

Act on principle, and make sure your Bots do, too.

Nick Bostrom, in Superintelligence, breaks the alignment problem into Motivation Selection and Capability Control. His Motivation Selection is about getting the AI to want the right things. He discusses ideas like Coherent Extrapolated Volition (programming a mind to pursue what we would want if we were wiser) and Corrigibility (designing it to accept correction rather than resist shutdown).

While I cover some ideas for those building the foundations, it's not the core of what a Bot Leader does. However, the principles and approaches are effectively a motivation selection framework.

When you Own the Culture, you are doing value alignment. You define what your Bots optimize for. You set the tone, the boundaries, the priorities. We should not expect the foundational engineers to give Bots an agenda pulling them away from your influence. I've highlighted Open Source, which is our best tool for verifying these trustable foundations.

Know What Your Bots Can Do¶

Bostrom's Capability Control is about restricting power: boxing, tripwires, stunting, incentive methods. Keep the AI contained until you're confident in its alignment.

You are already doing a version of this, or you should be.

Remember the world model from Chapter 4. Everything a Bot does, it does based on its internal model. That model has blind spots. Knowing those blind spots is capability control.

Your Part in the Bigger Picture¶

Bostrom also stresses global coordination. International treaties to prevent a race to the bottom where safety is sacrificed for speed.

I doubt you're negotiating treaties. But you are part of the aggregate.

I said in Chapter 2 that I don't see a future where humans have much impact without a loyal team of Bots. That cuts both ways. If you and your Bots are operating on principle, that's a node of aligned AI in the world. If enough nodes exist, we have a culture of alignment, which carries more weight than policy, anyway.

Implementation Specifics¶

While this is one of the more technical chapters, I have intentionally avoided going deep into details about the technical execution of the ideas. In rapid technological change, a static object like a book is not going to be a resource for the latest and greatest.

If you want to know how to execute, I'd recommend this: Talk to a Bot.

With that disclaimer, I will suggest a few things...